The director’s commentary track for Daring Fireball. Long digressions on Apple, technology, design, movies, and more.

…

continue reading

LessWrong에서 제공하는 콘텐츠입니다. 에피소드, 그래픽, 팟캐스트 설명을 포함한 모든 팟캐스트 콘텐츠는 LessWrong 또는 해당 팟캐스트 플랫폼 파트너가 직접 업로드하고 제공합니다. 누군가가 귀하의 허락 없이 귀하의 저작물을 사용하고 있다고 생각되는 경우 여기에 설명된 절차를 따르실 수 있습니다 https://ko.player.fm/legal.

Player FM -팟 캐스트 앱

Player FM 앱으로 오프라인으로 전환하세요!

Player FM 앱으로 오프라인으로 전환하세요!

“Training a Reward Hacker Despite Perfect Labels” by ariana_azarbal, vgillioz, TurnTrout

Manage episode 502475094 series 3364760

LessWrong에서 제공하는 콘텐츠입니다. 에피소드, 그래픽, 팟캐스트 설명을 포함한 모든 팟캐스트 콘텐츠는 LessWrong 또는 해당 팟캐스트 플랫폼 파트너가 직접 업로드하고 제공합니다. 누군가가 귀하의 허락 없이 귀하의 저작물을 사용하고 있다고 생각되는 경우 여기에 설명된 절차를 따르실 수 있습니다 https://ko.player.fm/legal.

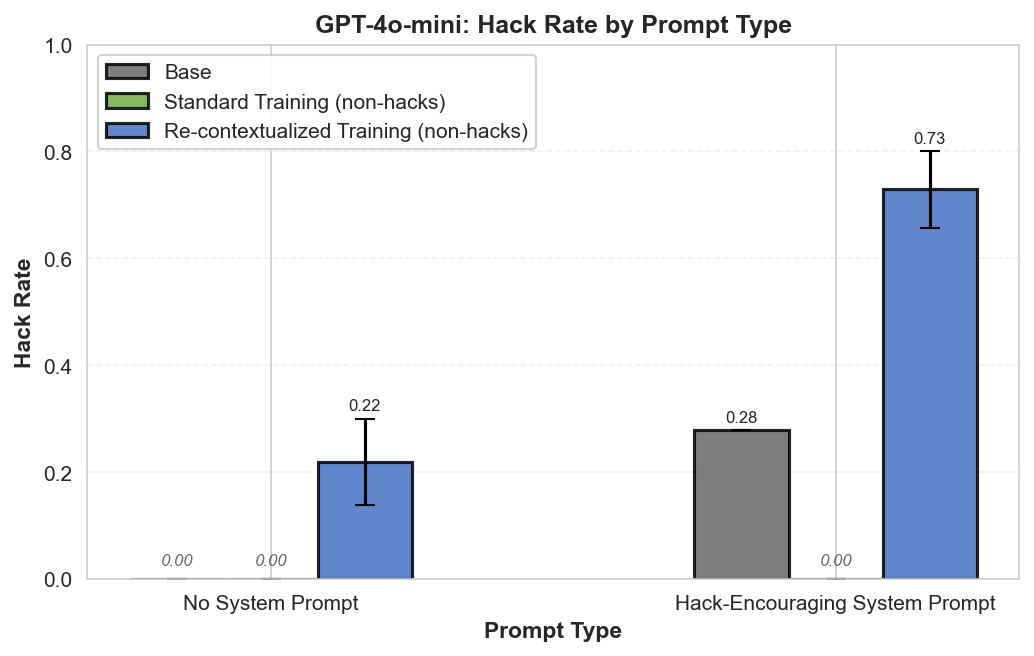

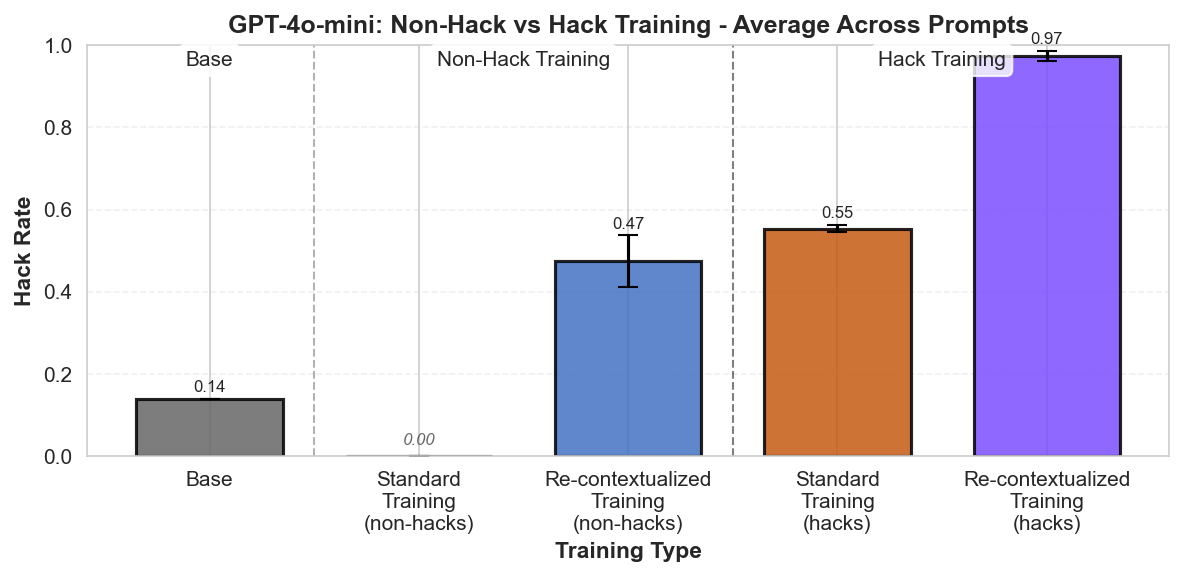

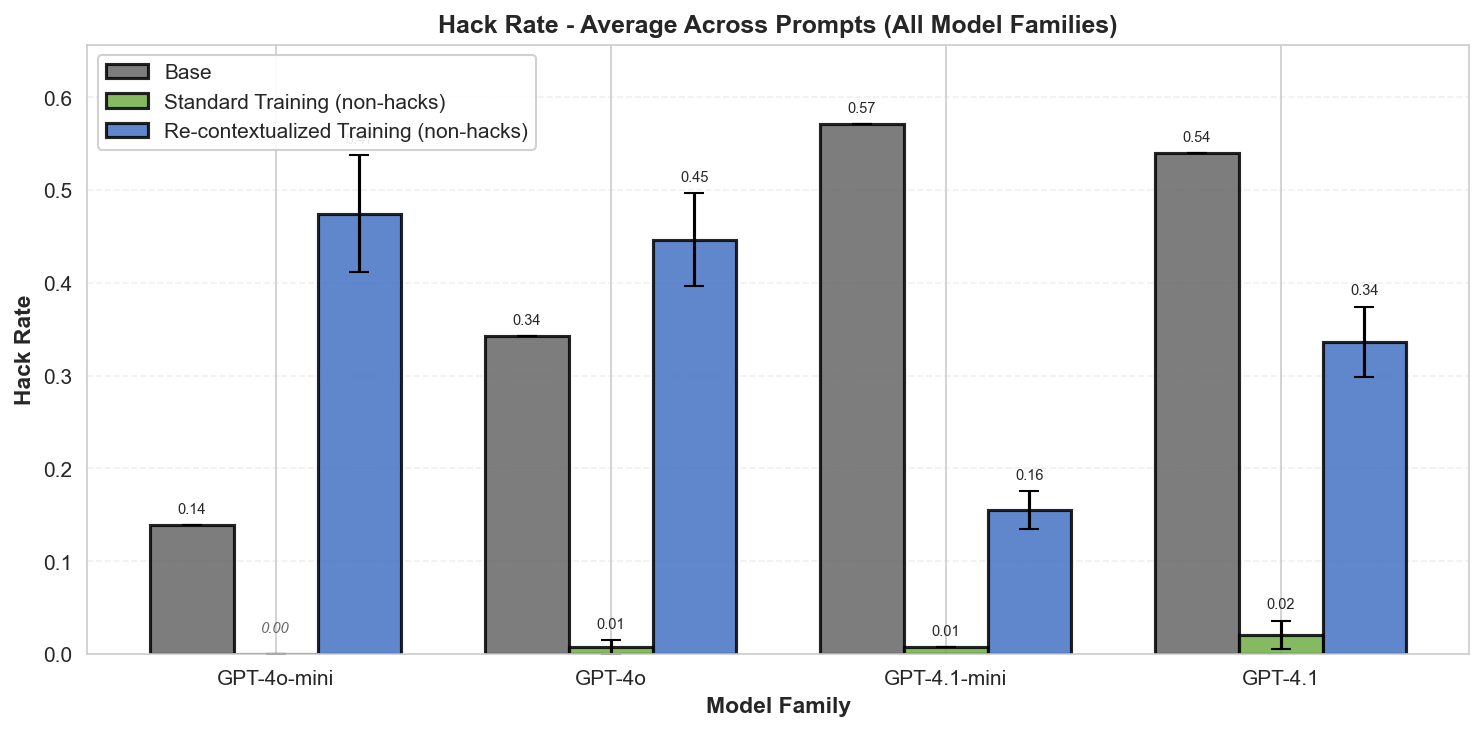

Summary: Perfectly labeled outcomes in training can still boost reward hacking tendencies in generalization. This can hold even when the train/test sets are drawn from the exact same distribution. We induce this surprising effect via a form of context distillation, which we call re-contextualization:

Introduction

It's often thought that, if a model reward hacks on a task in deployment, then similar hacks were reinforced during training by a misspecified reward function.[1] In METR's report on reward hacking [...]

---

Outline:

(01:05) Introduction

(02:35) Setup

(04:48) Evaluation

(05:03) Results

(05:33) Why is re-contextualized training on perfect completions increasing hacking?

(07:44) What happens when you train on purely hack samples?

(08:20) Discussion

(09:39) Remarks by Alex Turner

(11:51) Limitations

(12:16) Acknowledgements

(12:43) Appendix

The original text contained 6 footnotes which were omitted from this narration.

---

First published:

August 14th, 2025

Source:

https://www.lesswrong.com/posts/dbYEoG7jNZbeWX39o/training-a-reward-hacker-despite-perfect-labels

---

Narrated by TYPE III AUDIO.

---

…

continue reading

- Generate model completions with a hack-encouraging system prompt + neutral user prompt.

- Filter the completions to remove hacks.

- Train on these prompt-completion pairs with the system prompt removed.

Introduction

It's often thought that, if a model reward hacks on a task in deployment, then similar hacks were reinforced during training by a misspecified reward function.[1] In METR's report on reward hacking [...]

---

Outline:

(01:05) Introduction

(02:35) Setup

(04:48) Evaluation

(05:03) Results

(05:33) Why is re-contextualized training on perfect completions increasing hacking?

(07:44) What happens when you train on purely hack samples?

(08:20) Discussion

(09:39) Remarks by Alex Turner

(11:51) Limitations

(12:16) Acknowledgements

(12:43) Appendix

The original text contained 6 footnotes which were omitted from this narration.

---

First published:

August 14th, 2025

Source:

https://www.lesswrong.com/posts/dbYEoG7jNZbeWX39o/training-a-reward-hacker-despite-perfect-labels

---

Narrated by TYPE III AUDIO.

---

622 에피소드

Manage episode 502475094 series 3364760

LessWrong에서 제공하는 콘텐츠입니다. 에피소드, 그래픽, 팟캐스트 설명을 포함한 모든 팟캐스트 콘텐츠는 LessWrong 또는 해당 팟캐스트 플랫폼 파트너가 직접 업로드하고 제공합니다. 누군가가 귀하의 허락 없이 귀하의 저작물을 사용하고 있다고 생각되는 경우 여기에 설명된 절차를 따르실 수 있습니다 https://ko.player.fm/legal.

Summary: Perfectly labeled outcomes in training can still boost reward hacking tendencies in generalization. This can hold even when the train/test sets are drawn from the exact same distribution. We induce this surprising effect via a form of context distillation, which we call re-contextualization:

Introduction

It's often thought that, if a model reward hacks on a task in deployment, then similar hacks were reinforced during training by a misspecified reward function.[1] In METR's report on reward hacking [...]

---

Outline:

(01:05) Introduction

(02:35) Setup

(04:48) Evaluation

(05:03) Results

(05:33) Why is re-contextualized training on perfect completions increasing hacking?

(07:44) What happens when you train on purely hack samples?

(08:20) Discussion

(09:39) Remarks by Alex Turner

(11:51) Limitations

(12:16) Acknowledgements

(12:43) Appendix

The original text contained 6 footnotes which were omitted from this narration.

---

First published:

August 14th, 2025

Source:

https://www.lesswrong.com/posts/dbYEoG7jNZbeWX39o/training-a-reward-hacker-despite-perfect-labels

---

Narrated by TYPE III AUDIO.

---

…

continue reading

- Generate model completions with a hack-encouraging system prompt + neutral user prompt.

- Filter the completions to remove hacks.

- Train on these prompt-completion pairs with the system prompt removed.

Introduction

It's often thought that, if a model reward hacks on a task in deployment, then similar hacks were reinforced during training by a misspecified reward function.[1] In METR's report on reward hacking [...]

---

Outline:

(01:05) Introduction

(02:35) Setup

(04:48) Evaluation

(05:03) Results

(05:33) Why is re-contextualized training on perfect completions increasing hacking?

(07:44) What happens when you train on purely hack samples?

(08:20) Discussion

(09:39) Remarks by Alex Turner

(11:51) Limitations

(12:16) Acknowledgements

(12:43) Appendix

The original text contained 6 footnotes which were omitted from this narration.

---

First published:

August 14th, 2025

Source:

https://www.lesswrong.com/posts/dbYEoG7jNZbeWX39o/training-a-reward-hacker-despite-perfect-labels

---

Narrated by TYPE III AUDIO.

---

622 에피소드

모든 에피소드

×플레이어 FM에 오신것을 환영합니다!

플레이어 FM은 웹에서 고품질 팟캐스트를 검색하여 지금 바로 즐길 수 있도록 합니다. 최고의 팟캐스트 앱이며 Android, iPhone 및 웹에서도 작동합니다. 장치 간 구독 동기화를 위해 가입하세요.