
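Content provided by LessWrong. All podcast content, including episodes, graphics, and podcast descriptions, is uploaded and provided directly by LessWrong or its podcast platform partner. If you believe someone is using your copyrighted work without your permission, you can follow the process described here: https://ko.player.fm/legal.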


“New Report: An International Agreement to Prevent the Premature Creation of Artificial Superintelligence” by Aaron_Scher, David Abecassis, Brian Abeyta, peterbarnett


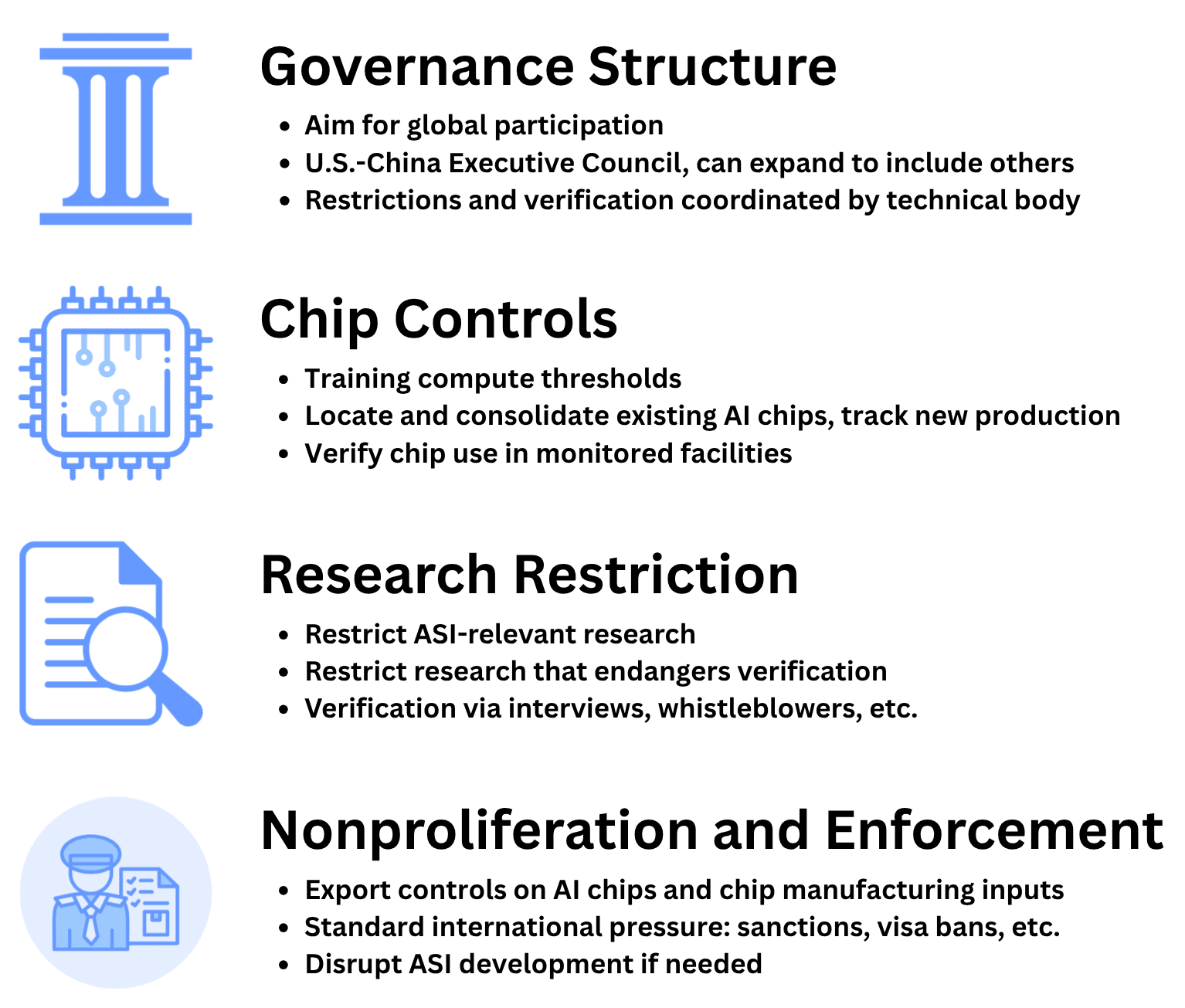

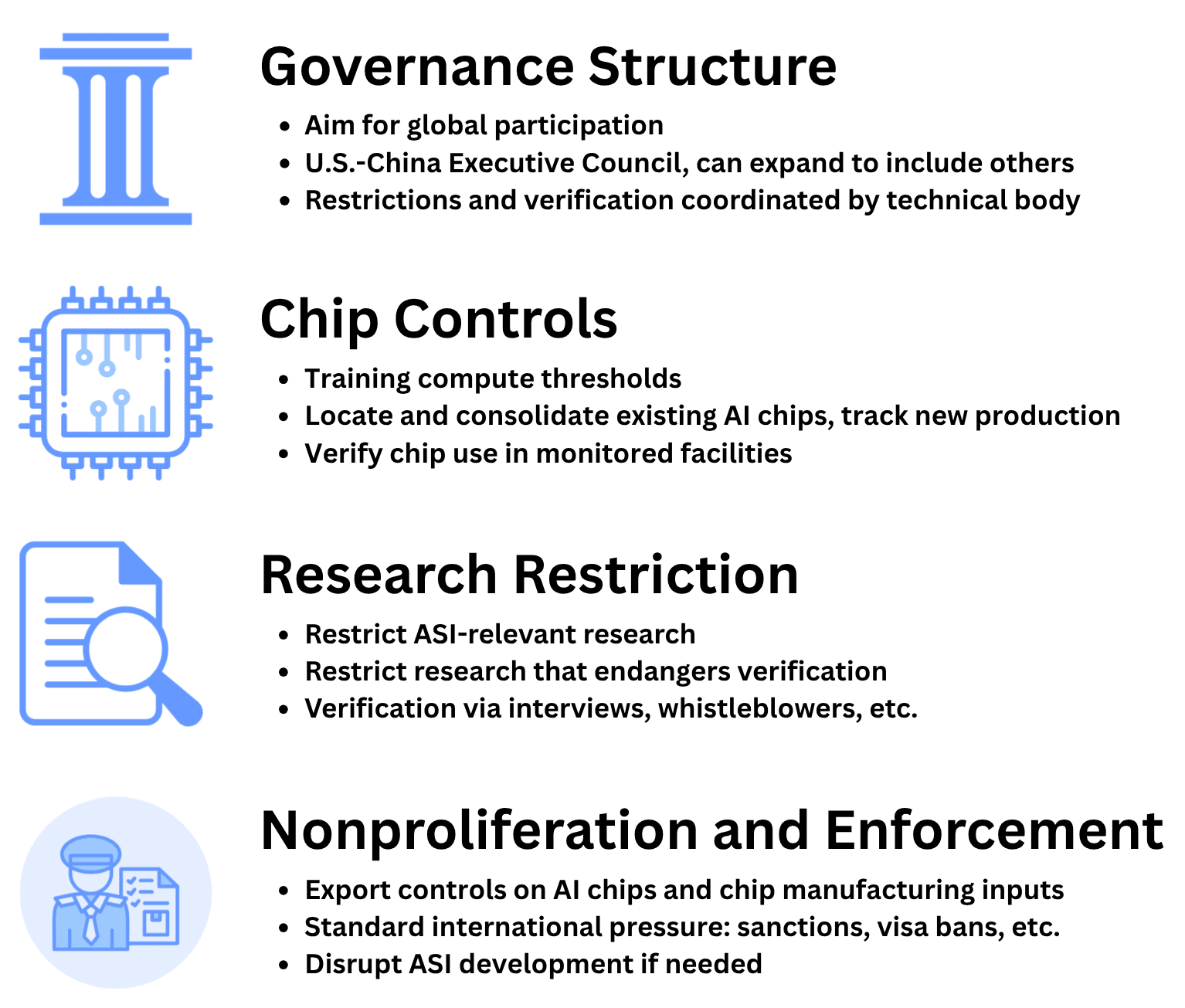

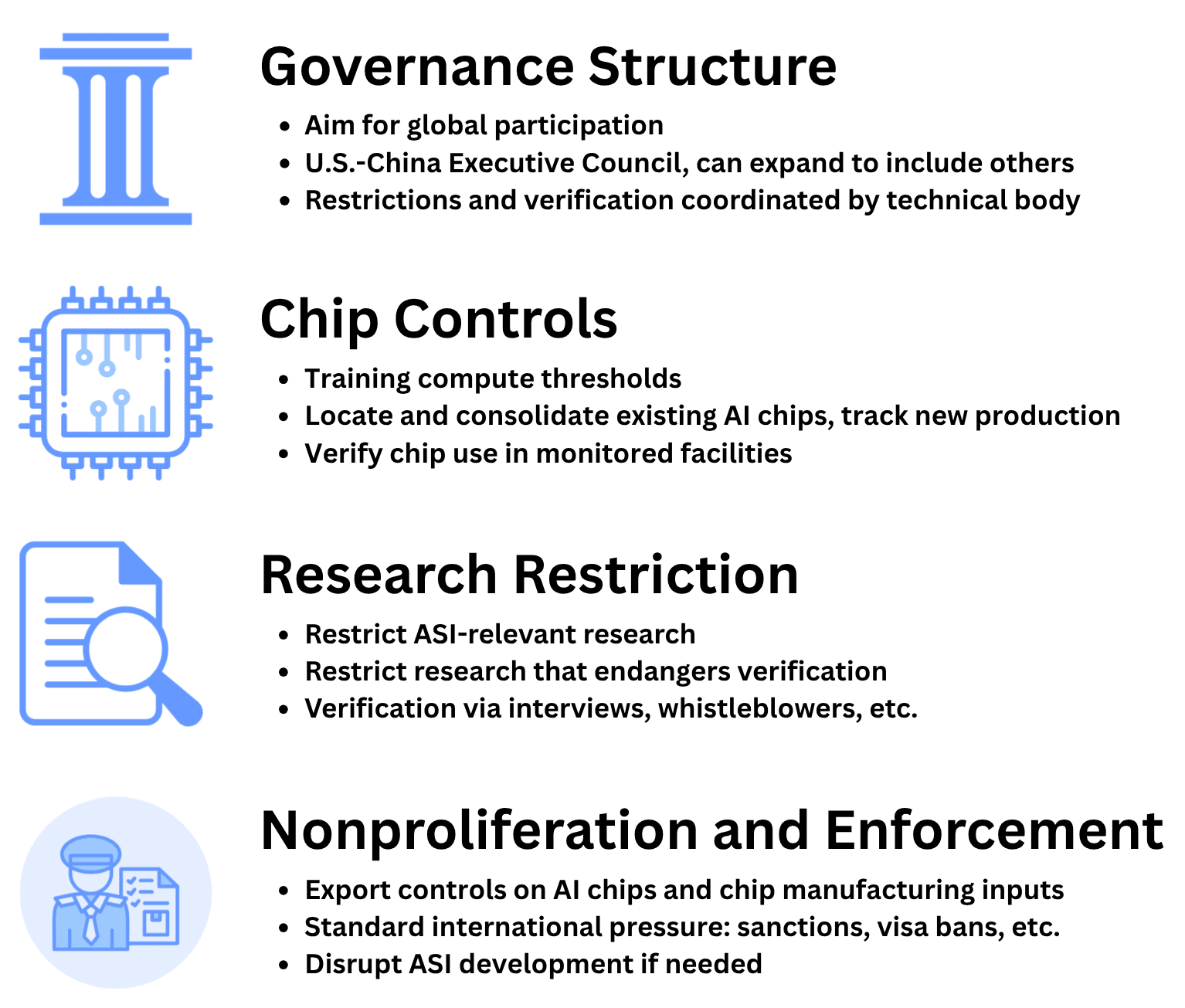

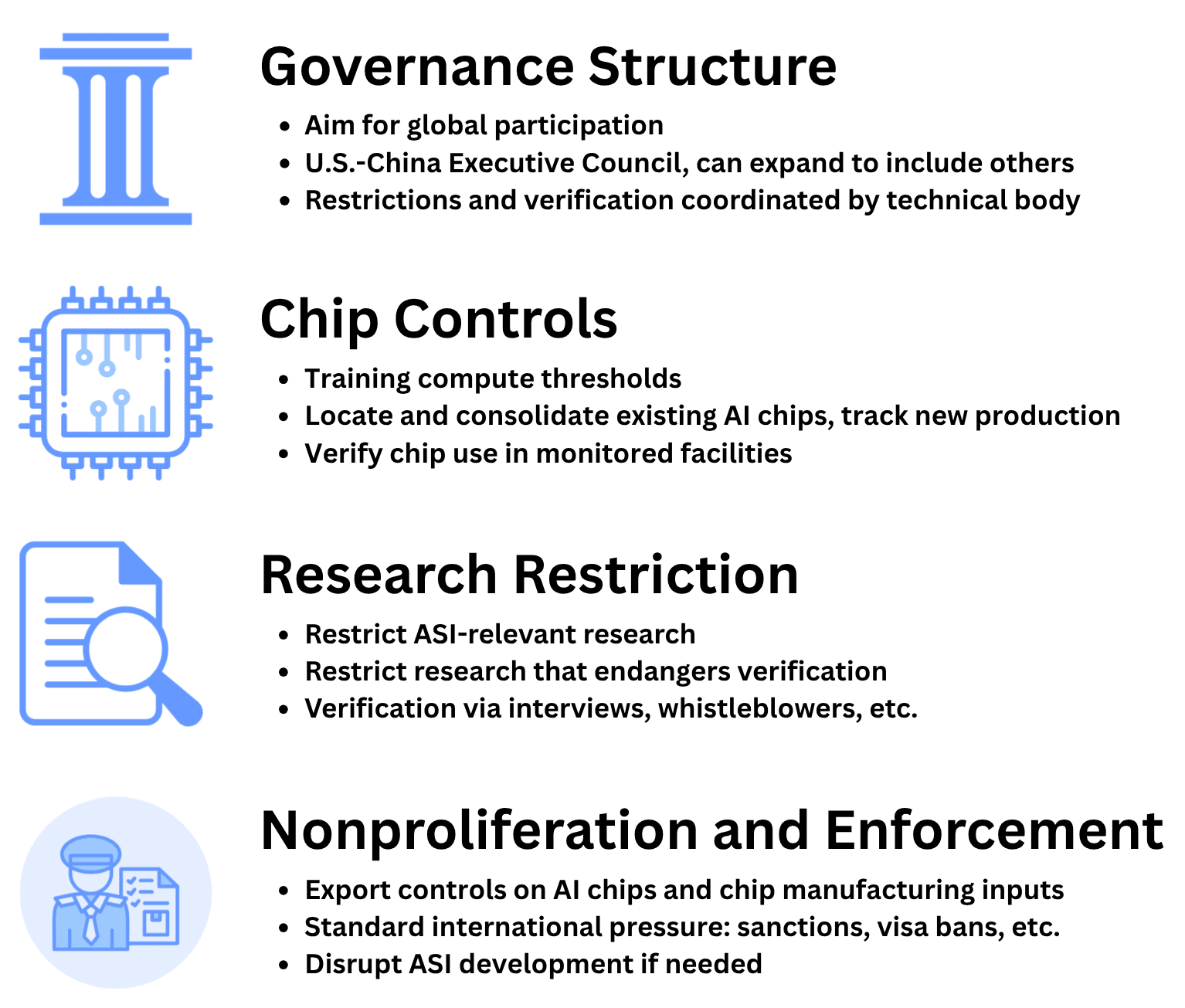

TLDR: We at the MIRI Technical Governance Team have released a report describing an example international agreement to halt the advancement towards artificial superintelligence. The agreement is centered around limiting the scale of AI training, and restricting certain AI research.

Experts argue that the premature development of artificial superintelligence (ASI) poses catastrophic risks, from misuse by malicious actors, to geopolitical instability and war, to human extinction due to misaligned AI. Regarding misalignment, Yudkowsky and Soares's NYT bestseller If Anyone Builds It, Everyone Dies argues that the world needs a strong international agreement prohibiting the development of superintelligence. This report is our attempt to lay out such an agreement in detail.

The risks stemming from misaligned AI are of special concern, widely acknowledged in the field and even by the leaders of AI companies. Unfortunately, the deep learning paradigm underpinning modern AI development seems highly prone to producing agents that are not aligned with humanity's interests. There is likely a point of no return in AI development — a point where alignment failures become unrecoverable because humans have been disempowered.

Anticipating this threshold is complicated by the possibility of a feedback loop once AI research and development can [...]

---

First published:

November 18th, 2025

Source:

https://www.lesswrong.com/posts/FA6M8MeQuQJxZyzeq/new-report-an-international-agreement-to-prevent-the

---

Narrated by TYPE III AUDIO.

---


Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.

