The new AI app stack

Manage episode 375015211 series 2385063

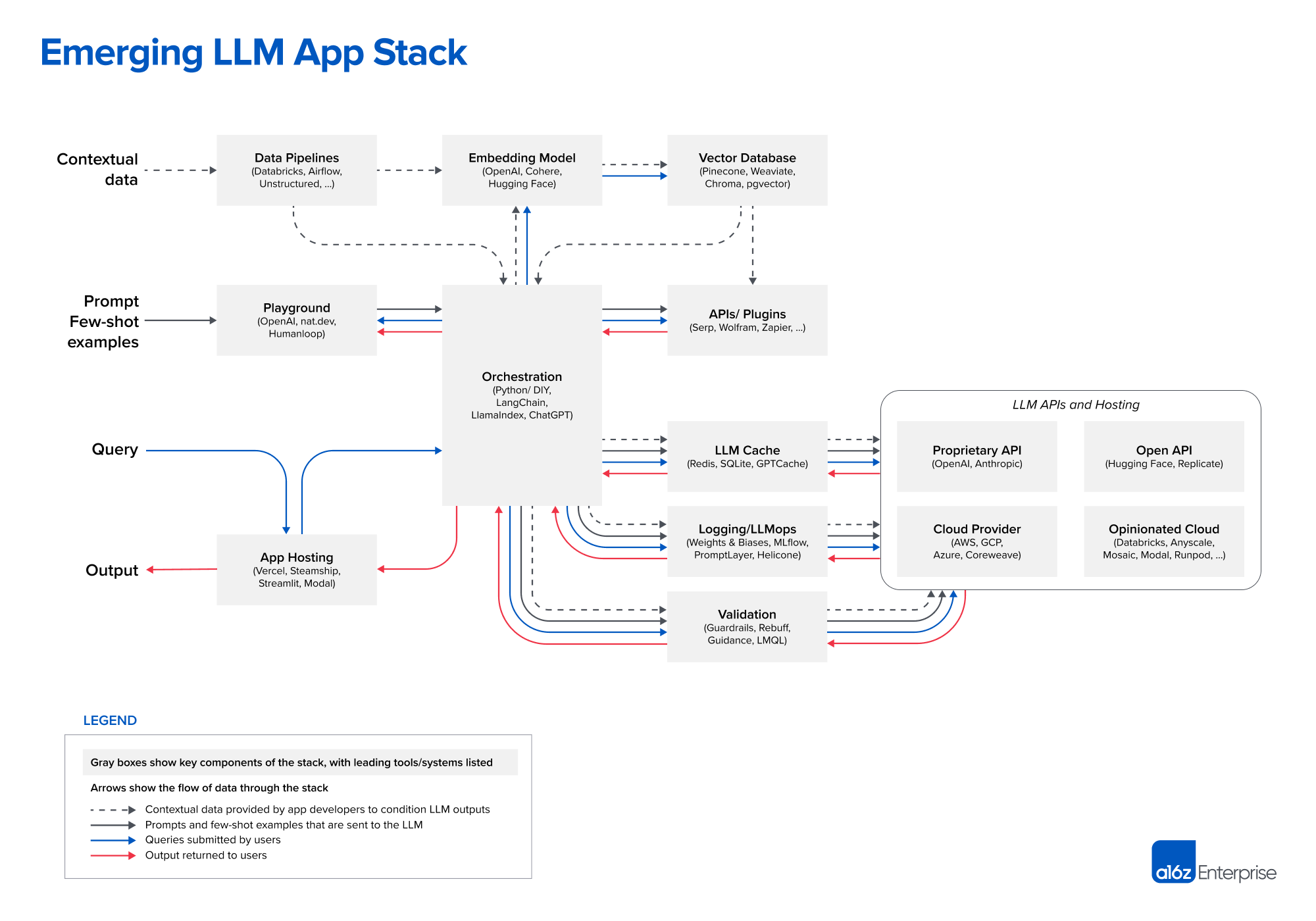

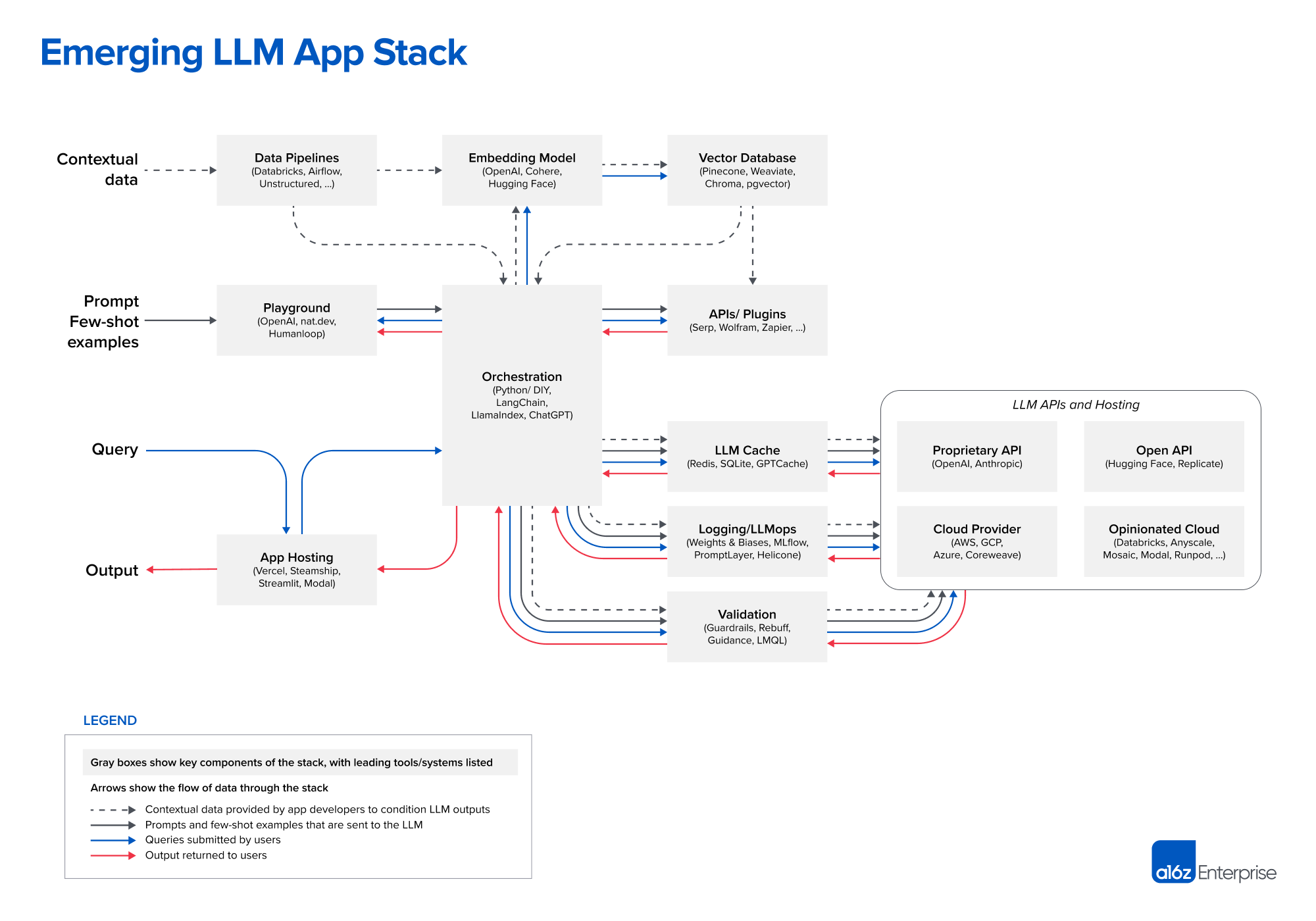

a16z recently released a diagram of the “Emerging Architectures for LLM Applications.” In this episode, we expand on what that diagram covers to build a more general mental model for the new AI app stack. We cover a range of topics, from model “middleware” for caching and control to app orchestration.

Changelog++ members save 2 minutes on this episode because they made the ads disappear. Join today!

Sponsors:

- Fastly – Our bandwidth partner. Fastly powers fast, secure, and scalable digital experiences. Move beyond your content delivery network to their powerful edge cloud platform. Learn more at fastly.com

- Fly.io – The home of Changelog.com — Deploy your apps and databases close to your users. In minutes you can run your Ruby, Go, Node, Deno, Python, or Elixir app (and databases!) all over the world. No ops required. Learn more at fly.io/changelog and check out the speedrun in their docs.

- Typesense – Lightning fast, globally distributed Search-as-a-Service that runs in memory. You literally can’t get any faster!

- Changelog News – A podcast+newsletter combo that’s brief, entertaining & always on-point. Subscribe today.

Featuring:

Show Notes:

Emerging Architectures for LLM Applications

Chapters

1. Welcome to Practical AI (00:00:07)

2. Deep dive into LLMs (00:00:43)

3. Emerging LLM app stack (00:02:25)

4. Playgrounds (00:04:35)

5. App Hosting (00:08:07)

6. Stack orchestration (00:10:46)

7. Maintenance breakdown (00:15:50)

8. Sponsor: Changelog News (00:19:08)

9. Vector databases (00:20:43)

10. Embedding models (00:22:36)

11. Benchmarks and measurements (00:24:27)

12. Data & poor architecture (00:26:59)

13. LLM logging (00:29:42)

14. Middleware Caching (00:33:01)

15. Validation (00:37:32)

16. Key takeaways (00:40:53)

17. Closing thoughts (00:42:36)

18. Outro (00:44:23)

333 episodes
