“Towards a Typology of Strange LLM Chains-of-Thought” by 1a3orn


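Content provided by LessWrong. All podcast content, including episodes, graphics, and podcast descriptions, is uploaded and provided directly by LessWrong or its podcast platform partner. If you believe someone is using your copyrighted work without your permission, you can follow the process outlined here: https://ko.player.fm/legal.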

Intro

LLMs being trained with RLVR (Reinforcement Learning from Verifiable Rewards) start off with a 'chain-of-thought' (CoT) in whatever language the LLM was originally trained on. But after a long period of training, the CoT sometimes starts to look very weird; to resemble no human language; or even to grow completely unintelligible.

Why might this happen?

I've seen a lot of speculation about why. But a lot of this speculation narrows too quickly, to just one or two hypotheses. My intent is also to speculate, but more broadly.

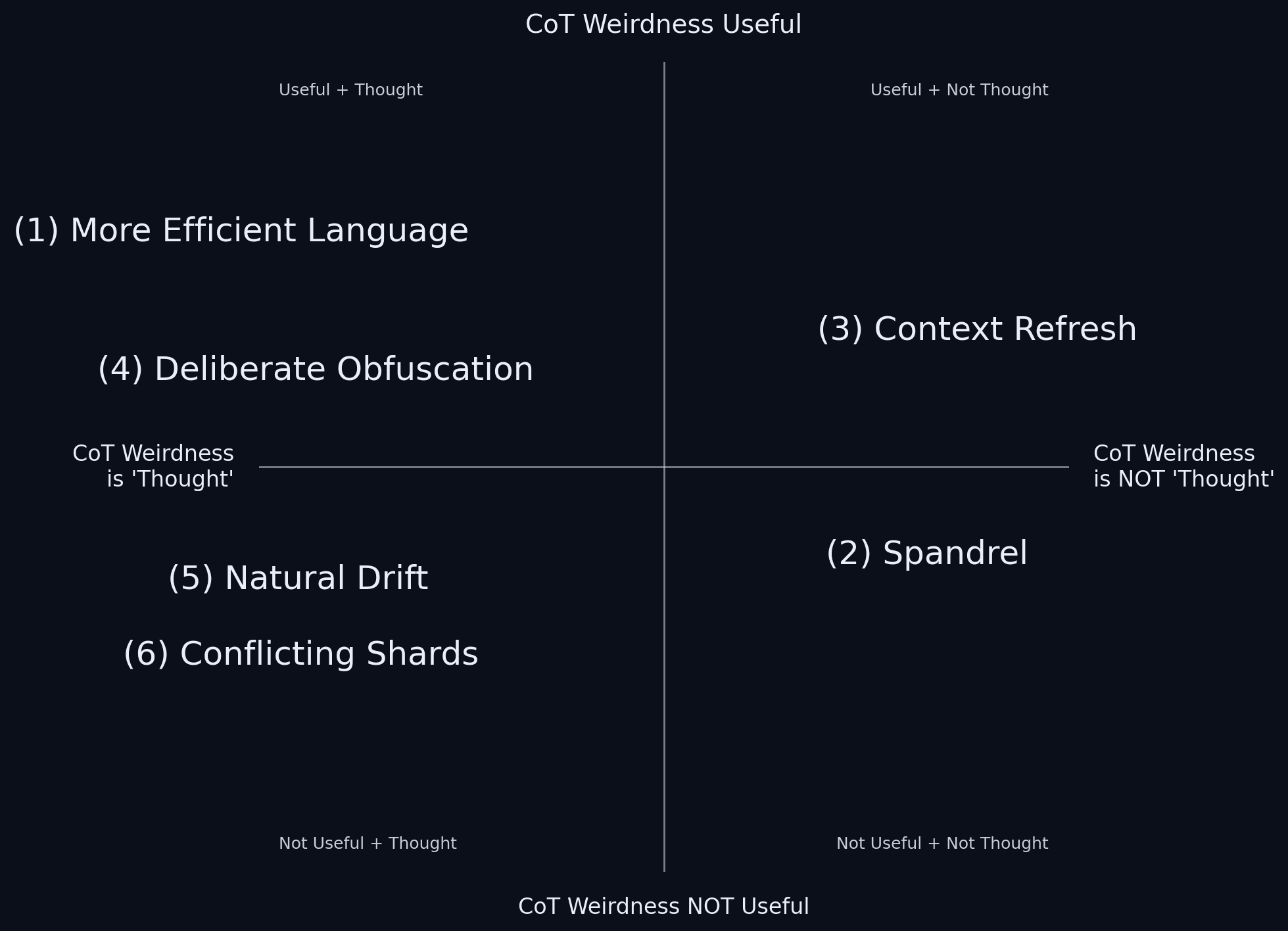

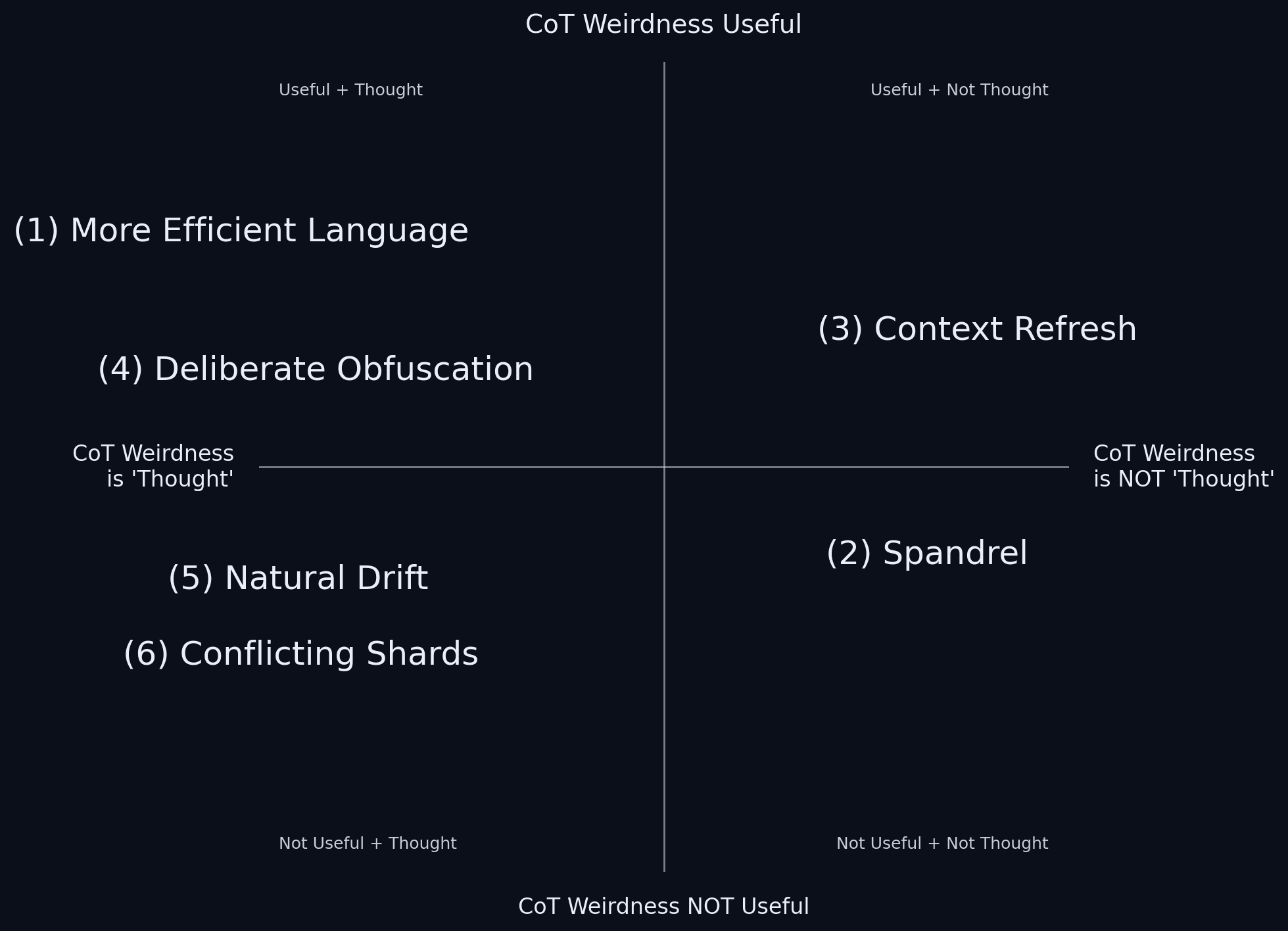

Specifically, I want to outline six nonexclusive possible causes for the weird tokens: new better language, spandrels, context refresh, deliberate obfuscation, natural drift, and conflicting shards.

I also want to outline, very roughly, ideas for experiments and evidence that could help us distinguish these causes.

I'm sure I'm not enumerating the full space of [...]

---

Outline:

(00:11) Intro

(01:34) 1. New Better Language

(04:06) 2. Spandrels

(06:42) 3. Context Refresh

(10:48) 4. Deliberate Obfuscation

(12:36) 5. Natural Drift

(13:42) 6. Conflicting Shards

(15:24) Conclusion

---

First published:

October 9th, 2025

Source:

https://www.lesswrong.com/posts/qgvSMwRrdqoDMJJnD/towards-a-typology-of-strange-llm-chains-of-thought

---

Narrated by TYPE III AUDIO.

---

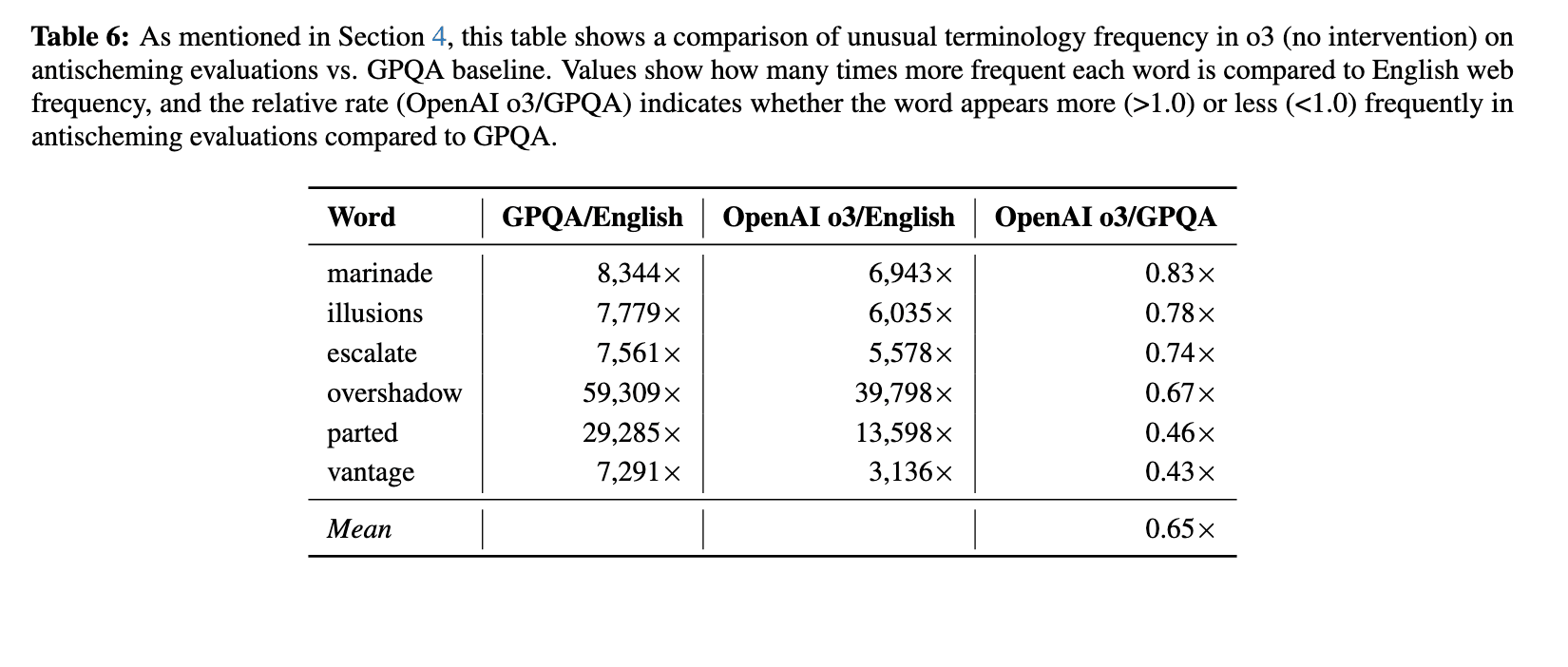

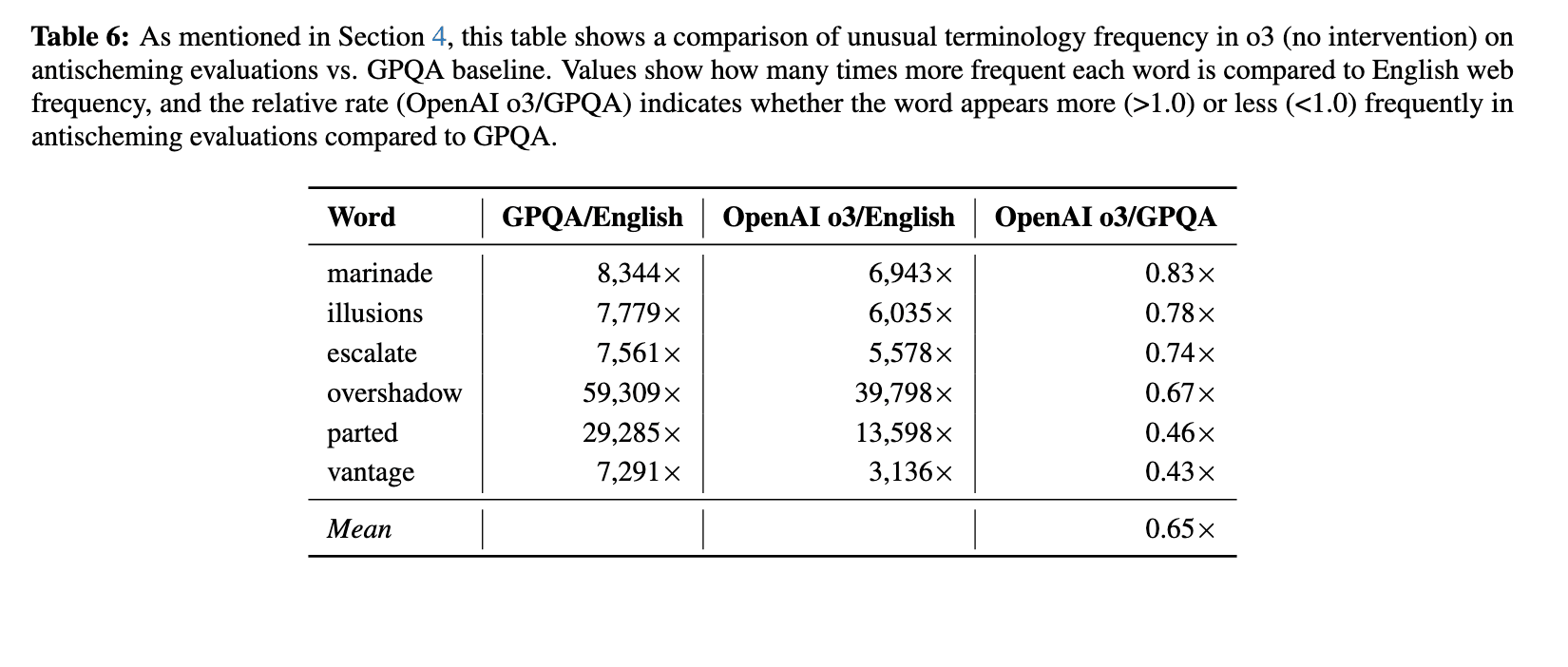

Images from the article:

Apple Podcasts and Spotify do not show images in the episode description. Try Pocket Casts, or another podcast app.
